AI Agent Hosting: Why Privacy Matters in the Era of AI Expansion

In a world increasingly powered by intelligent algorithms, AI agents represent powerful tools driving transformation across industries—from healthcare innovations to next-gen customer services and precise data analytics. With the AI wave comes an intensifying demand for private and secure solutions.

Recent high-profile cybersecurity breaches, coupled with elevated consumer awareness about data privacy and tightening regulations, have shifted the spotlight toward secure AI agent hosting solutions. Businesses today must ensure they choose a robust and privacy-oriented environment to host their AI agents. Companies cannot afford lax security—data breaches now result not only in financial and reputational damage but also severe regulatory penalties. Technological giants have faced challenges balancing AI progress with ethical considerations and user privacy. Consequently, hosting private AI agents securely has become an essential strategic move. Business leaders in IT and operations increasingly realise the critical relationship between hosting solutions, compliance, and long-term operational efficiency.

Hosting AI agents securely goes beyond typical automation and digital workflows. AI-powered technology, notably Large Language Models (LLMs), requires substantial processing power, precise compliance management, and strict data handling. It's imperative to choose a hosting environment that provides scalability, strong security, and reliability suited uniquely to advanced AI tasks.

Key Question for Businesses Using AI Agents:

How well protected is your AI infrastructure? Read on to compare 6 of the top hosting options and understand their benefits, risks, and suitability for your strategic objectives.

A Quick Glance at Key Decision Factors

Critical considerations include:

- security & compliance

- cost-effectiveness

- scalability & flexibility

- integration & management overhead

- level of control & vendor reliance
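
One way to make these factors comparable is a simple weighted score per hosting option. The sketch below is illustrative only: the weights, the option names and the 1 to 5 scores are all hypothetical placeholders, not an assessment of any real provider, and your own risk appetite should drive the weighting.

```python
# Hypothetical weights for the five decision factors; they should sum to 1.0.
WEIGHTS = {
    "security_compliance": 0.35,
    "cost_effectiveness": 0.20,
    "scalability_flexibility": 0.15,
    "integration_overhead": 0.15,
    "control_vendor_reliance": 0.15,
}


def weighted_score(scores, weights=WEIGHTS):
    """Return the weighted sum of 1-5 factor scores for one hosting option."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[factor] * scores[factor] for factor in weights)


# Placeholder scores (1 = poor, 5 = strong); replace with your own assessment.
options = {
    "option_a": {
        "security_compliance": 2,
        "cost_effectiveness": 5,
        "scalability_flexibility": 4,
        "integration_overhead": 5,
        "control_vendor_reliance": 2,
    },
    "option_b": {
        "security_compliance": 5,
        "cost_effectiveness": 2,
        "scalability_flexibility": 3,
        "integration_overhead": 3,
        "control_vendor_reliance": 5,
    },
}

# Rank options from highest to lowest weighted score.
ranked = sorted(options, key=lambda name: weighted_score(options[name]), reverse=True)
```

Changing the weights changes the ranking, which is the point: an organisation that weights cost most heavily will reach a different answer from one that weights security most heavily.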

Explore these decision factors in detail

So what are your options for AI agent hosting?

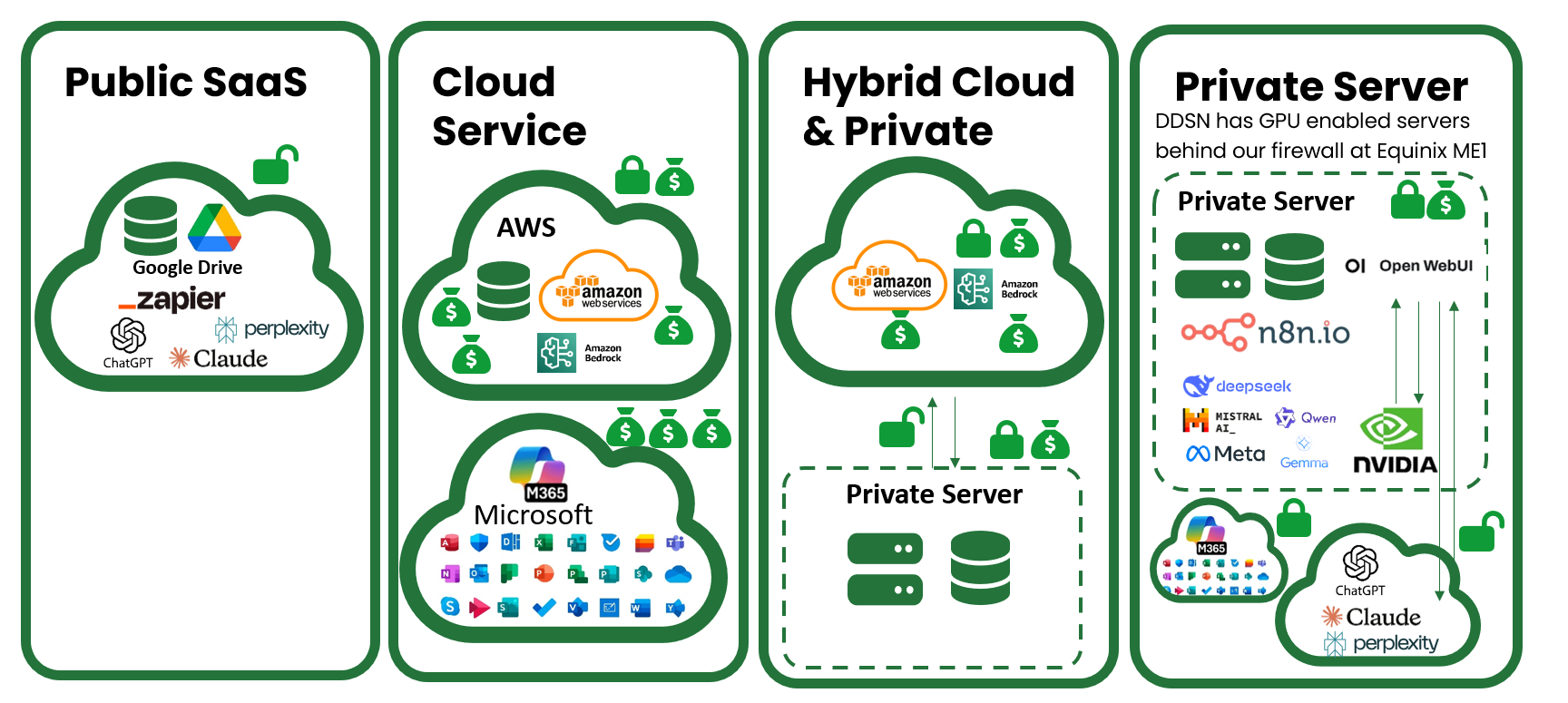

Graphical depiction of hosting options when running AI agents. Lock indicates level of security and money bags... well that should speak for itself.

There are so many options that choosing can feel overwhelming. Here are 6 of the most common architectures. Each links to a detailed discussion below.

- SaaS Stack - e.g. Google Cloud with SaaS tools and a Public LLM

- Major Public Cloud Providers (Azure, AWS)

- Niche AI-Specialised Cloud Hosting Providers

- Fully Managed Private Cloud Hosting for AI Agents

- Hybrid Cloud Hosting

- On-Premises Private Infrastructure

DDSN work on and deliver projects across all 6 options. We choose option 4 for ourselves because we value the security of our business intelligence and the flexibility to ask the AI to do anything without privacy concerns for our own or our clients' data. The most important step when making a choice is to understand the risks and your organisation's risk appetite, and go from there.

Option 1: SaaS Stack - e.g. Google Cloud with SaaS tools and a Public LLM

In this option you combine Software-as-a-Service (SaaS) tools and offerings. These are cloud hosted internationally and typically carry a monthly subscription or usage fee.

The example depicted above uses Google Cloud Platform combined with SaaS tools and public large language models, and it is well described in Candice DeVille's recent book, AI-Ready Playbook. This approach is streamlined and allows you to leverage readily available, proven AI capabilities without building extensive custom infrastructure. This model prioritises rapid deployment and ease of use, utilising Google's established AI models like Gemini alongside their cloud services and integration with various SaaS productivity and development tools. It's particularly attractive for organisations wanting to quickly implement AI agent capabilities without significant technical investment.

Benefits:

- Rapid deployment with access to the latest powerful public AI models

- Minimal upfront resource investment, ideal for rapid experimentation

- Simple setup and easy integration with a large number of tools and workflows

Concerns:

- Possibly insufficient customisation and limited control

- Increased risk for sensitive applications due to security gaps

- Dependence on external services could expose unexpected complexity

- Potential issues in compliance with the Australian Privacy Principles (APPs)

Benefits of using SaaS stacks for AI hosting

Rapid deployment with access to proven public AI models

Choosing Google Cloud coupled with established SaaS tools and public LLMs eliminates the lengthy setup and configuration phases typically required for AI infrastructure. You can begin experimenting with AI agents within days rather than months, leveraging models that Google has already trained and optimised using massive datasets and computational resources. These public models represent billions of dollars in research and development investment, providing sophisticated natural language understanding, reasoning capabilities, and broad knowledge that would be impossible to replicate independently. The SaaS integrations available through the public hosting mean your AI agents can immediately connect to familiar tools like Google Workspace, enabling rapid deployment of practical AI capabilities that integrate with your team's existing workflows. This speed to value can be particularly important when exploring AI use cases, validating business models, or responding quickly to competitive pressures that require immediate AI capabilities.

Minimal upfront resource investment ideal for rapid experimentation

The SaaS and public LLM combination requires minimal initial financial commitment—you pay only for what you use without purchasing hardware, building infrastructure, or making long-term capacity commitments. This low barrier to entry makes it practical to experiment with AI agent applications without securing substantial budget approval or justifying large capital expenditures. For proof-of-concept projects, initial AI explorations, or testing whether AI agents can address specific business challenges, this minimal investment approach allows you to fail fast and inexpensively if an approach doesn't work. The financial flexibility also means you can run multiple parallel experiments, testing different AI agent configurations or use cases simultaneously without concern about wasting significant resources. For startups and smaller organisations, this approach democratises access to sophisticated AI capabilities that were previously only available to well-funded enterprises.
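
To see what "pay only for what you use" can look like for an experiment, here is a rough estimate of monthly spend on a token-priced public LLM. The prices and traffic figures are hypothetical; check your provider's current price list before relying on any number.

```python
def monthly_llm_cost(requests_per_day, input_tokens, output_tokens,
                     price_in_per_million, price_out_per_million, days=30):
    """Estimate monthly spend for a token-priced LLM API.

    All prices are per one million tokens, in whatever currency you pass in.
    """
    total_in = requests_per_day * input_tokens * days
    total_out = requests_per_day * output_tokens * days
    return (total_in * price_in_per_million
            + total_out * price_out_per_million) / 1_000_000


# Hypothetical pilot: 500 requests a day, 1,000 input and 300 output tokens each,
# at assumed prices of 1.00 and 3.00 per million tokens.
pilot_cost = monthly_llm_cost(500, 1000, 300, 1.00, 3.00)
```

With these assumed figures the pilot costs 28.50 per month, which illustrates why small experiments rarely need budget approval. The same function also shows how quickly the bill grows if request volume rises a hundredfold.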

Simple setup integrates with various tools and workflows easily

Google Cloud's ecosystem is designed for easy integration with a broad array of development tools, SaaS applications, and data sources. The platform provides straightforward APIs, extensive documentation, and numerous tutorials that simplify the process of connecting AI agents to your existing systems. Pre-built connectors for popular applications mean you can establish working integrations without extensive custom development. The SaaS-centric approach also means updates, improvements, and new features happen automatically without requiring your team to manage deployments or maintain compatibility. For organisations without deep technical teams, this simplicity is invaluable—you can focus on defining what your AI agents should accomplish rather than wrestling with infrastructure complexity. The platform handles the underlying technical details of model serving, scaling, and maintenance, abstracting away much of the operational burden.

Concerns with using SaaS stacks for AI hosting

Possibly insufficient customisation and limited control

Public LLMs and standardised SaaS offerings are designed to serve broad audiences, which means they may not accommodate your organisation's unique requirements, industry-specific needs, or proprietary processes. You're constrained to the capabilities these services provide—custom model fine-tuning, specialised deployment configurations, or unique integration requirements may be difficult or impossible to implement. If your AI agents need to understand industry-specific terminology, follow proprietary business logic, or integrate deeply with custom internal systems, the limitations of public models and standard SaaS tools become apparent. Your organisation's competitive differentiation may suffer when using the same AI capabilities available to all your competitors. As your AI agent needs mature and become more sophisticated, you may find yourself constrained by platform limitations that require costly migration to more flexible infrastructure.

Increased risk for sensitive applications due to security gaps

Utilising public LLMs means your data and queries pass through Google's systems, potentially exposing sensitive business information, customer data, or proprietary knowledge to external infrastructure. While Google implements strong security measures, the shared nature of public models and the SaaS delivery model create risks that dedicated or private infrastructure avoids. Data transmitted to public LLMs might be logged, potentially used for model improvement, or inadvertently included in responses to other users if not properly isolated. For organisations handling regulated data, confidential business information, or operating in security-conscious industries, these risks may be unacceptable. The standardised security posture of SaaS tools may not align with your specific security requirements, leaving gaps in your security architecture. Compliance frameworks often struggle with public cloud and SaaS services where data processing and storage locations can be difficult to track and control.
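
One partial mitigation some teams use is to strip obvious identifiers from prompts before they leave your environment. The sketch below is a minimal illustration under stated assumptions: the two regular expressions are deliberately loose, the placeholder tokens are made up, and pattern matching like this will miss names, addresses and free-text details, so it is not a substitute for a proper privacy review.

```python
import re

# Loose email pattern; an illustrative assumption, not a full RFC 5322 validator.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Runs of 8 or more digits, optionally separated by single spaces or hyphens
# (phone, account or card-like numbers). Also an assumption.
LONG_NUMBER = re.compile(r"\b\d(?:[ -]?\d){7,}\b")


def redact(prompt):
    """Replace email addresses and long digit runs with placeholder tokens."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return LONG_NUMBER.sub("[NUMBER]", prompt)


safe_prompt = redact("Contact jane.doe@example.com or 0412 345 678 about the invoice")
```

The redacted prompt can then be sent to the external model, while the original text stays inside your own systems.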

Dependence on external services could expose unexpected complexity

While initially simple, deep reliance on Google Cloud, SaaS tools, and public LLMs creates dependencies that can introduce unexpected challenges. Service outages at Google or your SaaS providers directly impact your AI agent availability with no alternative infrastructure to provide continuity. Price changes, service discontinuations, or modifications to terms of service affect your operations without your input or control. The simplicity of initial setup can mask underlying complexity that emerges as your AI agent deployment scales—rate limits, API restrictions, or unexpected performance characteristics that weren't apparent during experimentation. Troubleshooting issues often requires working through external support channels rather than direct access to infrastructure and logs. As your requirements grow, the clean simplicity of the initial SaaS approach can evolve into a complex web of tool integrations, API management, and service dependencies that become difficult to understand and optimise.
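
If you do depend on external model APIs, a common way to soften outages and rate limits is to retry with exponential backoff and then fall back to a second provider. This is a sketch only: the provider callables are hypothetical stand-ins for whatever client code you use, and a production version would catch specific exception types rather than every exception.

```python
import time


def call_with_fallback(providers, prompt, retries=2, base_delay=0.5, sleep=time.sleep):
    """Try each provider in order, retrying with exponential backoff.

    `providers` is a sequence of callables that take a prompt and return text.
    """
    last_error = None
    for provider in providers:
        for attempt in range(retries + 1):
            try:
                return provider(prompt)
            except Exception as exc:  # broad on purpose for this sketch
                last_error = exc
                if attempt < retries:
                    # Wait base_delay, then 2x, then 4x ... before retrying.
                    sleep(base_delay * 2 ** attempt)
    raise RuntimeError("all providers failed") from last_error
```

A fallback only helps if the second provider is genuinely independent of the first, which is itself an architectural and cost decision.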

Potential issues in compliance with the Australian Privacy Principles (APPs)

For Australian organisations, using Google Cloud SaaS solutions with public LLMs introduces specific compliance challenges under the Australian Privacy Principles, particularly concerning APP 8 (Cross Border Disclosure of Personal Information) and APP 1 (Open and Transparent Management of Personal Information). When AI agents process data through global SaaS platforms and public language models, personal information can be transferred across regional and international boundaries to data centres worldwide, raising critical questions about data sovereignty and cross-border disclosure obligations. Australian organisations remain accountable for ensuring that overseas recipients handle personal information in accordance with the APPs, even when using third-party cloud services. The challenge intensifies because public LLMs may process Australian customer data on infrastructure located in multiple jurisdictions, making it difficult to maintain clear visibility and control over exactly where sensitive information resides and how it's handled. Organisations must ensure they have appropriate contractual arrangements with cloud providers that include robust confidentiality clauses and guarantees about data handling practices. Additionally, the shared responsibility model means that while Google provides compliant infrastructure, Australian organisations retain ultimate responsibility for their own APP compliance, including implementing appropriate consent mechanisms, maintaining privacy policies, and ensuring their use of AI agents aligns with the purposes for which personal information was originally collected. 
For organisations handling sensitive data—particularly in healthcare, finance, or government sectors—the limited control over data flows and processing locations in public SaaS and LLM environments may create compliance gaps that require additional safeguards, detailed privacy impact assessments, and potentially alternative hosting approaches that provide greater transparency and control over Australian personal information.
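
As a small aid to a privacy impact assessment, you can keep an inventory of where each service in your AI stack processes data and flag anything outside an approved list. The sketch below assumes a hand-maintained inventory; the service names are hypothetical, and the two region identifiers are Google Cloud's Sydney and Melbourne regions, which you should confirm against your provider's documentation. Passing this check does not by itself establish APP compliance.

```python
# Regions approved for personal information; an assumption for illustration.
ALLOWED_REGIONS = {"australia-southeast1", "australia-southeast2"}


def cross_border_services(service_regions, allowed=ALLOWED_REGIONS):
    """Return the services whose processing region is not on the approved list."""
    return {
        service: region
        for service, region in service_regions.items()
        if region not in allowed
    }


# Hypothetical inventory of services and the regions they run in.
inventory = {
    "vector-store": "australia-southeast1",
    "llm-endpoint": "us-central1",
    "audit-logs": "australia-southeast2",
}
flagged = cross_border_services(inventory)
```

Here only the LLM endpoint is flagged, which is the typical pattern: storage can often be pinned to Australia while model inference runs elsewhere.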

Option 2: Major Public Cloud Providers (Azure, AWS)

In this option you choose one of the major public cloud providers and use their technology stack to construct your AI agents. Major public cloud providers like Microsoft Azure and Amazon Web Services have established themselves as the dominant forces in cloud infrastructure, offering enterprise-grade solutions that power countless organisations worldwide. These platforms provide the foundation for AI agent hosting through their comprehensive service ecosystems, combining decades of infrastructure expertise with cutting-edge AI capabilities. Their global presence and continuous innovation make them attractive choices for organisations seeking proven, reliable cloud environments.

Benefits:

- Extensive scalability and robust infrastructure suitable for virtually any scale

- Integration capacity with existing software and platforms

- Immediate access to high-performing AI models and diverse tools

Concerns:

- Potential exposure to global security breaches and privacy risks

- Limited control over data storage locations and compliance

- Risk of vendor lock-in issues and escalating hidden costs

Benefits of using Public Cloud Providers for hosting your AI

Extensive scalability and robust infrastructure suitable for virtually any scale

One of the most compelling advantages of major public cloud providers is their virtually unlimited scalability. These platforms are designed to accommodate everything from small startups running experimental AI models to multinational corporations deploying enterprise-wide AI agent networks serving millions of users. The infrastructure is built on redundant, globally distributed data centres that ensure high availability and performance. When your AI agent workload suddenly spikes—perhaps due to seasonal demand or unexpected usage patterns—these platforms can automatically provision additional resources within minutes, ensuring your applications remain responsive. This elasticity means you're never constrained by physical infrastructure limitations, and you can scale down just as easily during quieter periods, optimising your resource utilisation and costs.
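
The elasticity described above usually comes down to a proportional scaling rule. The sketch below uses the same shape as the formula documented for the Kubernetes Horizontal Pod Autoscaler; the minimum and maximum bounds and the example figures are assumptions, and each cloud's own autoscaler has additional behaviour such as cooldown periods.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=20):
    """Scale replica count in proportion to how far the metric is from target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp so a spike cannot scale past budget and a lull cannot scale to zero.
    return max(min_replicas, min(max_replicas, raw))


# Four agent workers at 90% average utilisation against a 60% target.
scaled_up = desired_replicas(4, 90, 60)
```

The `max_replicas` bound is where cost control meets elasticity: without it, a traffic spike scales your bill as readily as your capacity.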

Integration capacity with existing software and platforms

Major cloud providers excel at integration, offering pre-built connectors and APIs for virtually every popular enterprise software platform and development framework. Whether you're working with customer relationship management systems, enterprise resource planning software, data warehouses, or specialised industry applications, you'll find native integration options that dramatically reduce development time and complexity. For AI agents specifically, these platforms provide seamless connections to data sources, authentication systems, and business intelligence tools. This integration ecosystem means your AI agents can easily access the data they need, interact with existing workflows, and deliver insights directly into the tools your team already uses. The comprehensive marketplace of third-party integrations further extends these capabilities, allowing you to build sophisticated AI solutions without reinventing the wheel.

Immediate access to high-performing AI models and diverse tools

These major providers invest billions in AI research and development, making state-of-the-art models and tools readily available to their customers. You gain immediate access to pre-trained large language models, computer vision systems, natural language processing capabilities, and machine learning frameworks that would take years and enormous resources to develop independently. The platforms offer managed services that handle the complex infrastructure requirements of running advanced AI models, including GPU allocation, model serving, and optimisation. Additionally, you benefit from continuous improvements as these providers update their AI offerings with the latest breakthroughs in the field. This access to cutting-edge technology democratises AI development, allowing organisations of all sizes to deploy sophisticated AI agents without building foundational capabilities from scratch.

Concerns with using Public Cloud Providers for AI hosting

Potential exposure to global security breaches and privacy risks

The massive scale and high profile of major public cloud providers make them attractive targets for sophisticated cybercriminals and state-sponsored actors. While these companies invest heavily in security, their shared infrastructure model means that a vulnerability discovered in one part of their system could potentially affect multiple customers. When security incidents occur at this scale, they tend to make headlines and affect thousands of organisations simultaneously. Additionally, the multi-tenant nature of public clouds means your AI agents and data reside in environments shared with countless other organisations, creating theoretical attack vectors that don't exist in isolated environments. For organisations handling highly sensitive data—such as healthcare records, financial information, or proprietary research—this shared infrastructure model introduces risks that must be carefully evaluated and mitigated through additional security layers.

Limited control over data storage locations and compliance

Despite offering region selection options, major public cloud providers ultimately control where and how your data is physically stored and processed. For AI agents processing sensitive information, this limitation becomes particularly problematic when dealing with data sovereignty requirements, such as the GDPR's rules on the personal data of people in the EU or industry-specific regulations about financial or healthcare information. While these providers offer compliance certifications, the underlying infrastructure decisions remain in their hands, and changes to data routing or storage policies may occur with limited notice. Organisations operating in highly regulated industries or multiple jurisdictions often struggle to maintain continuous compliance visibility, as the complexity of these global platforms can obscure exactly where data traverses and resides. This lack of granular control means you must trust the provider's compliance framework rather than implementing your own end-to-end governance.

Risk of vendor lock-in issues and escalating hidden costs

Major cloud providers design their services to work optimally within their own ecosystems, creating subtle but powerful forces that increase switching costs over time. As you build AI agents using provider-specific tools, APIs, and managed services, your codebase becomes increasingly intertwined with proprietary technologies that don't easily translate to other platforms. The initial pricing may appear competitive, but costs can escalate unpredictably as your AI agent usage grows, particularly around data egress fees, API calls, and premium support services. Organisations often discover that while compute costs are transparent, the numerous auxiliary services required for production AI deployments—monitoring, logging, data transfer, storage tiers, and specialised support—create a complex cost structure that's difficult to predict or optimise. Migration away from these platforms becomes increasingly expensive and technically challenging as your dependency deepens, potentially leaving you with limited negotiating power on pricing and terms.
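
One common way to limit this kind of entanglement is to keep provider-specific calls behind a small interface of your own, so agent logic never imports a vendor SDK directly. The sketch below is illustrative: `ChatModel`, `EchoModel` and `summarise` are hypothetical names, and a real adapter would wrap one vendor's client inside a class with the same `complete` method.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only model interface the rest of the codebase is allowed to use."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend for local testing; a real adapter would wrap a vendor SDK."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


def summarise(model: ChatModel, text: str) -> str:
    """Agent logic depends on the ChatModel interface, not on any provider."""
    return model.complete(f"Summarise in one sentence: {text}")


result = summarise(EchoModel(), "hello")
```

This does not remove switching costs such as data egress or retraining, but it confines code changes to one adapter class if you do move.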

Option 3: Niche AI-Specialised Cloud Hosting Providers

Niche AI-specialised cloud hosting providers have emerged to fill a specific gap in the market, focusing exclusively on the unique requirements of AI and machine learning workloads. Unlike general-purpose cloud providers, these companies build their entire infrastructure and service offerings around the specific needs of AI development and deployment. They understand the nuances of GPU optimisation, model serving, and the iterative nature of AI development, offering tailored solutions that eliminate many of the complexities organisations face when trying to adapt general cloud platforms to AI-specific use cases.

Benefits:

- Specialisation in AI-focused technology and personalised infrastructure solutions

- Direct support from teams focused solely on AI environments

- Enhanced flexibility in deployment scenarios compared to larger providers

Concerns:

- Possibly lower infrastructure reliability compared to major providers

- Occasional scalability restrictions with potentially limited resources

- Operational risks due to financial stability or smaller teams

Benefits

Specialisation in AI-focused technology and personalised infrastructure solutions

These providers dedicate their entire technology stack to optimising AI workloads, resulting in infrastructure specifically tuned for the computational patterns of machine learning and AI agent operations. Rather than offering general-purpose computing that you must configure for AI use, they provide pre-optimised environments with the right GPU configurations, memory hierarchies, and storage systems designed for large model training and inference. Their platforms often include specialised features like automatic model versioning, experiment tracking, and deployment pipelines built specifically for AI workflows. This specialisation means you spend less time on infrastructure configuration and more time on developing and refining your AI agents. The personalised approach extends to custom solutions tailored to your specific AI architecture, whether you're running transformer-based language models, computer vision systems, or hybrid AI agents that combine multiple capabilities.

Direct support from teams focused solely on AI environments

When technical challenges arise, you're working with engineers and support staff who live and breathe AI infrastructure daily. These teams understand the specific pain points of AI development—from debugging GPU memory issues to optimising inference latency for real-time AI agents. Their focused expertise means faster problem resolution and more valuable guidance on architectural decisions. Rather than navigating a general support system that handles everything from web hosting to database management, you gain direct access to specialists who can immediately understand your AI-specific challenges and provide relevant solutions. This focused support often includes proactive recommendations for improving your AI agent performance, cost optimisation strategies specific to AI workloads, and early access to emerging AI infrastructure innovations that could benefit your deployment.

Enhanced flexibility in deployment scenarios compared to larger providers

Niche providers typically offer more flexible and customisable deployment options because they're not constrained by massive legacy infrastructure or standardised service offerings designed for millions of diverse customers. They can quickly adapt to emerging AI frameworks, support experimental deployment patterns, and accommodate unique architectural requirements that might not fit into the rigid service tiers of major cloud providers. This flexibility extends to pricing models, allowing for more creative arrangements that align costs with your actual usage patterns rather than forcing you into preset tiers. For organisations with specific compliance requirements, unique data processing needs, or innovative AI architectures, these providers often prove more willing and able to accommodate special requests and work collaboratively on custom solutions.

Concerns

Possibly lower infrastructure reliability compared to major providers

Niche providers typically operate at a smaller scale with fewer data centres and less redundancy than the global infrastructure of major cloud providers. This reality can translate to higher risks of localised outages, longer recovery times, and less geographic diversity for disaster recovery scenarios. While they may achieve impressive uptime within their operational scope, they lack the vast resources to maintain the same level of redundancy across continents. If their primary data centre experiences issues, you may have fewer failover options compared to major providers with dozens of global regions. For AI agents that require 24/7 availability or serve global audiences, this infrastructure limitation could introduce unacceptable risk. Additionally, the financial resources available for infrastructure upgrades and expansions may be limited, potentially leaving you on older hardware or waiting longer for access to the latest GPU generations.

Occasional scalability restrictions with potentially limited resources

When your AI agent requirements suddenly surge—perhaps due to rapid business growth or viral adoption of your AI-powered service—niche providers may struggle to accommodate dramatic increases in resource demand. Their capacity planning is based on smaller customer bases, and they may not have the spare capacity to immediately provision hundreds of GPUs or dramatically increase your infrastructure allocation. This constraint can force you into queues for resources or require advance planning and reservation of capacity that reduces your operational flexibility. During peak demand periods when multiple customers need increased resources simultaneously, you may face competition for limited infrastructure that doesn't occur with larger providers' vast resource pools. For organisations anticipating rapid growth or unpredictable scaling requirements, these limitations could hinder business objectives or force you to maintain buffer capacity at higher cost.

Operational risks due to financial stability or smaller teams

Niche providers face business risks that don't typically concern customers of major cloud providers. These companies may be venture-funded startups still proving their business model, leaving questions about their long-term viability. If a niche provider experiences financial difficulties, gets acquired, or pivots their business strategy, your AI agent hosting could be disrupted with potentially short notice. Smaller teams also mean concentration risk—if key technical staff depart, the loss of institutional knowledge and expertise could impact service quality. The pace of innovation might slow, or critical expertise in your specific deployment architecture could disappear. While these providers often offer more personalised attention, that same small-team dynamic means they have less depth to handle multiple simultaneous issues or maintain consistent service levels during staff transitions or company changes.

Option 4: Fully Managed Private Cloud Hosting for AI Agents

Fully managed private cloud hosting represents a premium approach where a specialised provider builds and maintains dedicated infrastructure exclusively for your organisation's AI agent needs. This model combines the security and control benefits of private infrastructure with the convenience of having experts manage the complex operational details. It's particularly appealing to organisations that require high security and customisation but lack the internal expertise or resources to build and maintain private infrastructure independently.

Benefits:

- High security with dedicated, customised infrastructure exclusively for your needs

- Vendor-managed compliance, updates, and maintenance reduce internal complexity

- Tailored support from experts skilled in secure AI management

Concerns:

- Higher upfront investment and potential ongoing cost premiums

- Dependence on a single provider offering fewer flexibility options

- Potential slower turnaround on some support or maintenance tasks

Benefits

High security with dedicated, customised infrastructure exclusively for your needs

With fully managed private cloud hosting, your AI agents run on infrastructure that serves only your organisation, eliminating the shared-resource vulnerabilities inherent in multi-tenant environments. Every server, network device, and storage system is dedicated to your workloads, allowing for security configurations tailored precisely to your requirements without compromise. You can implement custom network segmentation, specialised encryption schemes, and proprietary security protocols that would be impossible in shared environments. This dedicated infrastructure means there's no risk of noisy neighbour problems affecting your AI agent performance, no possibility of data bleeding between tenants, and no sharing of physical resources with unknown entities. For organisations in highly regulated industries or those handling extremely sensitive data, this level of isolation provides peace of mind that public cloud environments cannot match. The infrastructure can be physically located in facilities of your choosing, with custom security measures from biometric access controls to air-gapped networks if required.

Vendor-managed compliance, updates, and maintenance reduce internal complexity

While you gain the security benefits of private infrastructure, you avoid the operational burden of managing it yourself. The managed service provider handles all the complex, time-consuming tasks of maintaining secure, up-to-date infrastructure—from applying security patches and firmware updates to managing certificate renewals and compliance documentation. They maintain expertise in the specific compliance frameworks relevant to your industry, whether that's HIPAA for healthcare, PCI DSS for financial services, or defence industry security requirements. This expertise ensures your AI agent infrastructure remains continuously compliant without requiring you to build internal teams of infrastructure specialists. The provider typically offers detailed compliance reporting, audit trails, and documentation that simplifies your own compliance verification processes. When regulations change or new security threats emerge, the provider proactively adapts your infrastructure rather than waiting for you to identify and implement necessary changes.

Tailored support from experts skilled in secure AI management

Fully managed providers assign dedicated teams familiar with your specific infrastructure and AI agent deployments, creating a partnership rather than a transactional support relationship. These experts develop deep understanding of your unique requirements, architecture decisions, and operational patterns, enabling them to provide proactive recommendations and rapidly resolve issues. Unlike general cloud support where you explain your setup to different technicians each time, your managed provider maintains institutional knowledge about your environment. They can suggest optimisations specific to your AI workloads, identify potential issues before they impact operations, and help architect new capabilities with full context of your existing infrastructure. This level of tailored support often includes participation in your planning processes, collaborative problem-solving on complex challenges, and serving as an extension of your team for infrastructure-related decisions.

Concerns

Higher upfront investment and potential ongoing cost premiums

Fully managed private cloud hosting requires significant initial investment in dedicated hardware, infrastructure setup, and customisation to your requirements. Unlike public cloud's pay-as-you-go model, you're committing to minimum capacity levels regardless of your actual usage, which can result in paying for unused resources during lower-demand periods. The premium nature of this service means higher per-unit costs compared to shared infrastructure, as you're bearing the full cost of hardware that serves only your organisation. Ongoing management fees for the expert support and maintenance add to the total cost of ownership. For smaller organisations or those with variable AI agent workloads, the financial commitment may be difficult to justify compared to more elastic alternatives. Budget planning becomes more complex as you must forecast infrastructure needs far in advance to avoid costly mid-contract expansions or being locked into inadequate capacity.

Dependence on a single provider offering fewer flexibility options

Choosing a fully managed private cloud creates deep dependency on your provider's specific infrastructure, tools, and operational processes. Switching providers becomes extremely complex and costly because your entire infrastructure must be rebuilt elsewhere, requiring extensive migration planning and likely service disruption. You're also dependent on that provider's financial stability and strategic direction—if they exit the managed hosting business, get acquired, or change their service model, you face forced migration at potentially inconvenient times. The customisation that makes this solution attractive also reduces flexibility, as changes to your infrastructure must go through the provider's change management processes rather than being implemented immediately. You may find yourself constrained by their hardware refresh cycles, technology choices, and service roadmap rather than being able to quickly adopt new technologies or pivot to different infrastructure approaches as your AI strategy evolves.

Potential slower turnaround on some support or maintenance tasks

While you gain expert management, you sacrifice some of the immediate control that comes with either public cloud self-service or internal infrastructure management. Even routine changes may require submitting requests to your managed provider, going through their change control processes, and waiting for scheduling according to their operational procedures. Emergency changes outside business hours might incur premium fees or face delays depending on your service level agreement terms. This intermediary layer can slow down innovation and iteration cycles, which is particularly frustrating for AI development teams accustomed to rapid experimentation. During critical situations requiring immediate infrastructure changes, you depend on the provider's response times and available resources rather than being able to take direct action. The balance between thorough, professional management and operational agility must be carefully negotiated in service contracts.

Option 5: Hybrid Cloud Hosting

Hybrid cloud hosting combines private and public cloud infrastructure into a unified environment, allowing organisations to strategically distribute their AI agent workloads based on security requirements, performance needs, and cost considerations. This approach acknowledges that different aspects of AI operations have different requirements—some data and processing must remain private, while other workloads can benefit from public cloud elasticity and cost-effectiveness. When properly implemented, hybrid cloud provides the best of both worlds, though it introduces its own complexity challenges.

Benefits:

- Optimal blend of flexibility, cost-efficiency, and security

- Enhanced operational resiliency with diverse environments

- Ability to retain sensitive data locally while scaling other services publicly

Concerns:

- Complexity in managing multi-cloud integrations and interoperability

- Requires robust internal capabilities for effective management

- Potential increased security risks from interactions between private/public clouds

Benefits

Optimal blend of flexibility, cost-efficiency, and security

Hybrid cloud enables sophisticated workload placement strategies where you position each component of your AI agent infrastructure in the most appropriate environment. Highly sensitive data and core AI models can reside in your private infrastructure with maximum security controls, while less sensitive workloads like development environments, testing, or burst capacity needs leverage cost-effective public cloud resources. This strategic distribution allows you to optimise spending by avoiding overprovisioning private infrastructure for peak loads that rarely occur, instead using public cloud for temporary scaling. You gain flexibility to shift workloads between environments as requirements change, moving from experimental public cloud deployment to production private hosting as AI agents mature. The hybrid approach also provides financial flexibility, allowing you to balance capital expenditure on private infrastructure with operational expenditure in public cloud based on your financial preferences and constraints.

Enhanced operational resiliency with diverse environments

Operating across multiple infrastructure environments provides inherent redundancy that protects against catastrophic failures. If your private infrastructure experiences issues, critical AI agent services can fail over to public cloud, maintaining business continuity. Conversely, public cloud outages don't completely halt operations because you retain core capabilities in your private environment. This diversification extends beyond disaster recovery: different environments provide testing grounds for new technologies and deployment approaches without risking your primary infrastructure. You can validate new AI models, experiment with different hosting configurations, or test infrastructure changes in one environment before rolling them out broadly. The architectural resilience of hybrid cloud also protects against vendor issues, regulatory changes, or strategic pivots that might affect a single environment. By maintaining operational capability across diverse infrastructure, you reduce single points of failure and increase your organisation's overall resilience.
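
As a rough illustration of the failover idea described above, the sketch below walks a priority-ordered list of environments and returns the first one whose health check passes. The environment names and the health-check functions are hypothetical placeholders, not part of any provider's API; a real deployment would probe actual endpoints.

```python
from typing import Callable, List, Optional, Tuple

# Each entry pairs a (hypothetical) environment name with a health-check callable.
Environment = Tuple[str, Callable[[], bool]]


def pick_environment(environments: List[Environment]) -> Optional[str]:
    """Return the name of the first environment whose health check passes."""
    for name, is_healthy in environments:
        try:
            if is_healthy():
                return name
        except Exception:
            # A probe that raises is treated the same as an unhealthy environment.
            continue
    return None


def private_down() -> bool:
    return False  # simulate an outage in the private environment


def public_up() -> bool:
    return True


# Private infrastructure is preferred; public cloud is the fallback.
environments = [("private-cloud", private_down), ("public-cloud", public_up)]
selected = pick_environment(environments)
print(selected)
```

Ordering the list by preference keeps the policy explicit: swapping the two entries would make public cloud the primary and private infrastructure the fallback.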

Ability to retain sensitive data locally while scaling other services publicly

Hybrid cloud excels at addressing data sovereignty and privacy requirements while maintaining operational flexibility for less sensitive workloads. Your most confidential data (customer personal information, proprietary algorithms, or regulated health records) never leaves your controlled private environment, yet your AI agents can still leverage public cloud capabilities for appropriate tasks. This segregation allows you to meet strict regulatory requirements for data residency while avoiding the costs and limitations of hosting everything privately. For example, your AI agents might process sensitive customer queries using models hosted privately while leveraging public cloud for data analytics on anonymised datasets or for serving less sensitive customer interactions. This approach provides clear audit trails showing exactly where each type of data resides and is processed, simplifying compliance verification and giving stakeholders confidence that sensitive information receives appropriate protection.
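
The example above can be expressed as a simple placement rule. The following is a minimal sketch under assumed conventions: the sensitivity tiers, workload names, and the rule that anonymised regulated data may run publicly are all illustrative choices, and your own compliance requirements should define the real policy.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    REGULATED = 3  # e.g. personal data or health records


@dataclass(frozen=True)
class Workload:
    name: str
    sensitivity: Sensitivity
    anonymised: bool = False


def place(workload: Workload) -> str:
    """Route a workload to 'private' or 'public' under an assumed policy."""
    # Regulated data stays private unless it has been anonymised first.
    if workload.sensitivity is Sensitivity.REGULATED and not workload.anonymised:
        return "private"
    # Internal data is kept private in this sketch, regardless of anonymisation.
    if workload.sensitivity is Sensitivity.INTERNAL:
        return "private"
    return "public"


# Hypothetical workloads mirroring the example in the text.
workloads = [
    Workload("customer-query-agent", Sensitivity.REGULATED),
    Workload("analytics-on-anonymised-data", Sensitivity.REGULATED, anonymised=True),
    Workload("dev-sandbox", Sensitivity.PUBLIC),
]
placement = {w.name: place(w) for w in workloads}
print(placement)
```

Keeping the rule in one function means the placement decision for every workload can be logged and reviewed, which supports the audit trail mentioned above.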

Concerns

Complexity in managing multi-cloud integrations and interoperability

Operating hybrid cloud environments requires connecting diverse infrastructure with different APIs, management interfaces, security models, and operational procedures. Ensuring seamless communication between private and public components demands sophisticated networking, consistent identity management across environments, and careful orchestration of workloads spanning multiple platforms. Your AI agents may need to access data residing in different clouds, requiring complex data synchronisation strategies and careful latency management to maintain performance. Each environment requires its own monitoring, logging, and alerting systems, which must then be aggregated for unified visibility. Updates and changes must be coordinated across platforms to maintain compatibility and avoid breaking integrations. The technical complexity of maintaining consistent security policies, network configurations, and operational procedures across diverse environments significantly increases the difficulty of infrastructure management compared to single-platform deployments.

Requires robust internal capabilities for effective management

Successfully operating hybrid cloud demands sophisticated internal expertise spanning multiple infrastructure platforms, networking technologies, and cloud architectures. Your team must understand the nuances of both private infrastructure management and public cloud operations, along with the specialised knowledge of integrating them effectively. This requirement typically means hiring or developing staff with diverse skill sets and maintaining expertise across multiple technology stacks, increasing your personnel costs and training requirements. The complexity of troubleshooting issues that span environments requires engineers comfortable working at multiple infrastructure layers and across different vendor platforms. Smaller organisations often struggle to maintain the breadth and depth of expertise necessary for effective hybrid cloud operations, potentially leading to sub-optimal configurations, security gaps, or costly operational mistakes. Without strong internal capabilities, the theoretical benefits of hybrid cloud may fail to materialise as infrastructure becomes an operational burden rather than an enabling platform.

Potential increased security risks from interactions between private/public clouds

The integration points between private and public cloud components create potential vulnerability surfaces that don't exist in single-environment deployments. Each connection represents a pathway that must be secured, monitored, and maintained, expanding your attack surface. Data moving between environments could be intercepted if not properly encrypted and secured, and authentication mechanisms spanning multiple clouds create additional potential weak points. Misconfigurations at the boundaries between environments could inadvertently expose private resources to public access or create backdoor access paths into secured systems. The complexity of hybrid security models increases the likelihood of human error, as security teams must maintain consistent policies across fundamentally different platforms with different security paradigms. If your private and public environments don't maintain equivalent security standards, the entire hybrid deployment becomes only as secure as its weakest component, potentially negating the security benefits you sought from private infrastructure.

Option 6: On-Premises Private Infrastructure

On-premises private infrastructure represents the traditional approach of building and operating dedicated data centres or server rooms entirely within your own facilities. For AI agent hosting, this means purchasing and maintaining all the hardware, networking, storage, and supporting systems required to run your AI workloads, with everything physically located in spaces you control. This approach offers maximum control and isolation but demands substantial resources and expertise to implement effectively.

Benefits:

- Total control over data security and compliance adherence

- Seamless customisation and immediate responsiveness to specific business needs

- The highest assurance of data privacy and protection from external vulnerabilities

Concerns:

- Significant initial equipment, infrastructure, and personnel investment

- In-house maintenance complexities leading to staffing challenges

- Reduced ability to scale quickly and stay at the forefront of AI advancements

Benefits

Total control over data security and compliance adherence

With on-premises infrastructure, you maintain complete sovereignty over every aspect of your AI agent environment, from physical security of the facilities to the finest details of network configuration and data handling procedures. No external parties have access to your infrastructure without your explicit permission, eliminating concerns about third-party vulnerabilities or cloud provider security incidents. You can implement precisely the security measures your organisation requires, including air-gapped networks, custom encryption schemes, and proprietary security protocols that would be impossible in any shared or external environment. For organisations handling classified information, dealing with extreme privacy requirements, or operating in industries with stringent regulatory demands, this level of control provides certainty that no external infrastructure or management model can match. Compliance becomes more straightforward in some respects because you can demonstrate complete chain of custody for data and point to physical security measures that auditors can inspect directly.

Seamless customisation and immediate responsiveness to specific business needs

Operating your own infrastructure means there's no intermediary between your requirements and implementation—your team can make changes immediately without submitting requests to external providers or working within the constraints of standardised service offerings. Hardware choices, network architecture, storage configurations, and software deployments can be optimised precisely for your AI agent workloads without compromise. When business needs change, you can rapidly reconfigure infrastructure rather than waiting for a vendor's change control process or working around external limitations. This immediate responsiveness is particularly valuable during critical situations when rapid infrastructure adaptation could make the difference between success and failure. Custom integrations with proprietary systems, unique security requirements, or experimental AI architectures can be implemented exactly as designed without adapting to a provider's platform constraints.

The highest assurance of data privacy and protection from external vulnerabilities

On-premises infrastructure provides certainty about data residency: you know exactly where every piece of data resides because it's in facilities you control. There's no risk of data inadvertently crossing international boundaries through a provider's systems, no concerns about cloud providers' data handling practices, and no commingling of your information with other organisations' data in shared systems. External threats must first breach your physical and network perimeter security before reaching your AI agents and data, providing defence-in-depth that's clearly bounded. For organisations handling trade secrets, conducting sensitive research, or operating in adversarial environments, the peace of mind from knowing that critical data never leaves controlled facilities can justify the substantial costs and complexity of on-premises operations. The complete isolation from external infrastructure also insulates you from widespread cloud outages and provider-side security incidents that can simultaneously affect thousands of organisations.

Concerns

Significant initial equipment, infrastructure, and personnel investment

Building on-premises infrastructure for AI agents requires enormous upfront capital investment in servers, GPUs, networking equipment, storage systems, power and cooling infrastructure, and physical security systems. For AI workloads specifically, the cost of high-performance GPUs and the supporting infrastructure to power and cool them represents a substantial investment that must be made before hosting a single AI agent. Beyond hardware, you need facilities with adequate power, cooling, and physical security, which might mean constructing or significantly renovating existing spaces. The personnel investment is equally substantial—you must hire or train teams to handle infrastructure planning, deployment, ongoing management, security, and maintenance across multiple specialities. For smaller organisations or those just beginning AI initiatives, this upfront investment creates a significant barrier and may not be financially justifiable given the uncertainty about future AI workload requirements.

In-house maintenance complexities leading to staffing challenges

Operating on-premises infrastructure demands 24/7 attention, as hardware failures, security incidents, and performance issues don't respect business hours. You need staff with diverse expertise spanning data centre operations, network engineering, systems administration, security operations, and AI infrastructure specialisation—a combination of skills that's difficult and expensive to assemble and retain. When hardware fails at 2 AM or a critical security patch must be applied immediately, your team must respond rather than relying on a vendor's managed service. Staff vacations, illnesses, or departures create coverage challenges that organisations with small teams struggle to address. The rapid evolution of AI technology means continuous learning investments to keep your team current with emerging best practices, new hardware capabilities, and evolving security threats. Finding and retaining staff with both traditional infrastructure expertise and modern AI specialisation remains extremely challenging in today's competitive talent market.

Reduced ability to scale quickly and stay at the forefront of AI advancements

On-premises scaling requires procurement, delivery, installation, and configuration of physical hardware, a process measured in weeks or months rather than the minutes required to scale in cloud environments. This latency in scaling creates challenges for organisations with unpredictable AI workload growth or those needing to rapidly experiment with resource-intensive AI models. Hardware refresh cycles that make economic sense for on-premises operations typically span three to five years, meaning you may operate on older technology while cloud providers offer access to the latest GPUs and AI accelerators as soon as they're available. The pace of AI advancement means infrastructure optimised for today's models may be sub-optimal for next-generation architectures, yet you're committed to the hardware you've purchased. When new AI frameworks, deployment patterns, or infrastructure approaches emerge, adapting on-premises infrastructure requires careful planning and potentially significant investment, whereas cloud providers absorb these transitions and offer new capabilities as managed services.

Expertise and Support

DDSN Interactive can support you in selecting and implementing the right AI agent hosting solution, ensuring security, scalability, agility, and compliance suited to your organisation's needs.

Contact us today for a personalised consultation

Key Decision Factors for Choosing AI Hosting

Selecting the optimal hosting solution for your AI agents requires careful evaluation of several critical considerations that directly impact your operational success, security posture, and long-term flexibility.

Security & Compliance

How sensitive is your data, and what regulatory frameworks govern your operations? Highly regulated industries like healthcare, finance, or government may require dedicated private infrastructure with complete control over data residency and security measures. Less sensitive applications might operate acceptably on public cloud with appropriate safeguards. Consider both current compliance requirements and potential future regulations that could affect your infrastructure choices.

Cost-Effectiveness

Beyond initial pricing, evaluate total cost of ownership including management overhead, staffing requirements, scalability costs, and potential hidden fees. Public cloud appears inexpensive initially but can become costly at scale with data transfer fees and premium services. Private infrastructure requires substantial upfront investment but may prove more economical long-term for predictable, large-scale AI workloads. Balance financial constraints against operational requirements to find sustainable solutions.
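
To make the total-cost comparison concrete, here is a small sketch that finds the month at which a private deployment (upfront investment plus a lower monthly cost) becomes cheaper overall than pay-as-you-go public cloud. Every figure is an invented placeholder rather than real pricing, and the model ignores factors such as staffing changes, hardware refresh, and data transfer fees.

```python
from typing import Optional


def cumulative_cost(upfront: float, monthly: float, months: int) -> float:
    """Total spend after the given number of months."""
    return upfront + monthly * months


def break_even_month(
    public_monthly: float,
    private_upfront: float,
    private_monthly: float,
    horizon: int = 120,
) -> Optional[int]:
    """First month where private total cost <= public total cost, else None."""
    for month in range(1, horizon + 1):
        private_total = cumulative_cost(private_upfront, private_monthly, month)
        public_total = cumulative_cost(0.0, public_monthly, month)
        if private_total <= public_total:
            return month
    return None  # private never catches up within the horizon


# Illustrative figures only (hypothetical currency units).
month = break_even_month(
    public_monthly=10_000,
    private_upfront=120_000,
    private_monthly=5_000,
)
print(month)
```

If the break-even point falls beyond your planning horizon, or beyond the expected life of the hardware, the upfront investment is harder to justify on cost alone.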

Scalability & Flexibility

How quickly do your AI agent requirements change, and can you accurately predict future capacity needs? Organisations with unpredictable growth or experimental AI initiatives benefit from cloud elasticity, while those with stable, predictable workloads might optimise costs through dedicated infrastructure. Consider both rapid scaling for growth and the flexibility to scale down during slower periods without financial penalty.

Integration & Management Overhead

Assess your team's technical capabilities and capacity to manage infrastructure complexity. Simple SaaS and public cloud solutions reduce management burden but offer less customisation. Private and hybrid solutions provide control but demand significant expertise and ongoing attention. Honest evaluation of your organisational capabilities prevents selecting solutions that exceed your team's ability to operate effectively.

Level of Control & Vendor Reliance

Determine how much control you require over infrastructure decisions, security implementations, and operational procedures. Complete control through on-premises infrastructure comes with maximum responsibility and cost. Cloud services trade control for convenience and reduced operational burden. Assess vendor risks including financial stability, strategic alignment, and potential lock-in that could constrain future flexibility.
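
One way to combine the five factors above is a weighted decision matrix. The sketch below is only an illustration of the technique: the weights and the 1 to 5 scores are made-up placeholders, not an assessment of any hosting option, and you would substitute values agreed by your own stakeholders.

```python
from typing import Dict

FACTORS = ["security", "cost", "scalability", "management", "control"]


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of factor scores (higher is better)."""
    total_weight = sum(weights[f] for f in FACTORS)
    return sum(scores[f] * weights[f] for f in FACTORS) / total_weight


# Hypothetical weights: how much each factor matters to this organisation.
weights = {"security": 5, "cost": 2, "scalability": 2, "management": 1, "control": 3}

# Hypothetical 1-5 scores per option; placeholders for illustration only.
options = {
    "public-cloud": {"security": 2, "cost": 4, "scalability": 5, "management": 4, "control": 2},
    "hybrid-cloud": {"security": 4, "cost": 3, "scalability": 4, "management": 2, "control": 4},
    "on-premises": {"security": 5, "cost": 1, "scalability": 1, "management": 1, "control": 5},
}

ranking = sorted(
    options,
    key=lambda name: weighted_score(options[name], weights),
    reverse=True,
)
for name in ranking:
    print(f"{name}: {weighted_score(options[name], weights):.2f}")
```

Changing the weights changes the ranking, which is the point of the exercise: it forces an explicit conversation about which factors matter most before a hosting model is chosen.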

FAQ - Frequently Asked Questions

What is AI agent hosting?

AI agent hosting refers to dedicating infrastructure (such as cloud, hybrid, or on-premises solutions) to deploy, manage, and securely run artificial intelligence agents and large language models (LLMs). It involves ensuring your AI components have sufficient resources, security, reliability, and scalability tailored to your business needs.

Why does privacy matter in AI agent hosting?

Privacy in AI agent hosting is critical because AI models frequently process sensitive data. Secure private AI hosting protects your organisation from data leaks, cybersecurity threats, regulatory fines, and reputational damage, and supports compliance with stringent standards like GDPR and regional data sovereignty laws.

Which AI agent hosting solution is best?

The best AI agent hosting solution depends on your unique needs, such as budget, scalability demands, privacy considerations, and internal resources. Fully managed private clouds are ideal for strict compliance requirements, public clouds offer easy deployment, hybrid clouds balance security and cost, and on-premises setups deliver maximum privacy and control.

How much does AI agent hosting cost?

AI agent hosting costs vary significantly based on the scale, the hosting environment type (public, hybrid, private, or on-premises), and the provider. Public cloud solutions typically begin affordably but costs can rise rapidly with scale, while dedicated or on-premises solutions carry a higher initial investment but offer more predictable long-term costs.

How difficult is it to switch AI agent hosting providers?

Switching AI agent hosting providers can involve complications such as vendor lock-in challenges, data migration complexities, and possible downtime. However, selecting modular, flexible hosting solutions and working with experienced providers can significantly ease this transition and minimise disruption.